Why `vllm serve` Works on Day Zero (and What It Takes to Make It Fast)

A deep dive into vLLM’s tiered model integration — from the Transformers fallback that enables zero-day support to the native integration path that makes it fast.

A deep dive into vLLM’s tiered model integration — from the Transformers fallback that enables zero-day support to the native integration path that makes it fast.

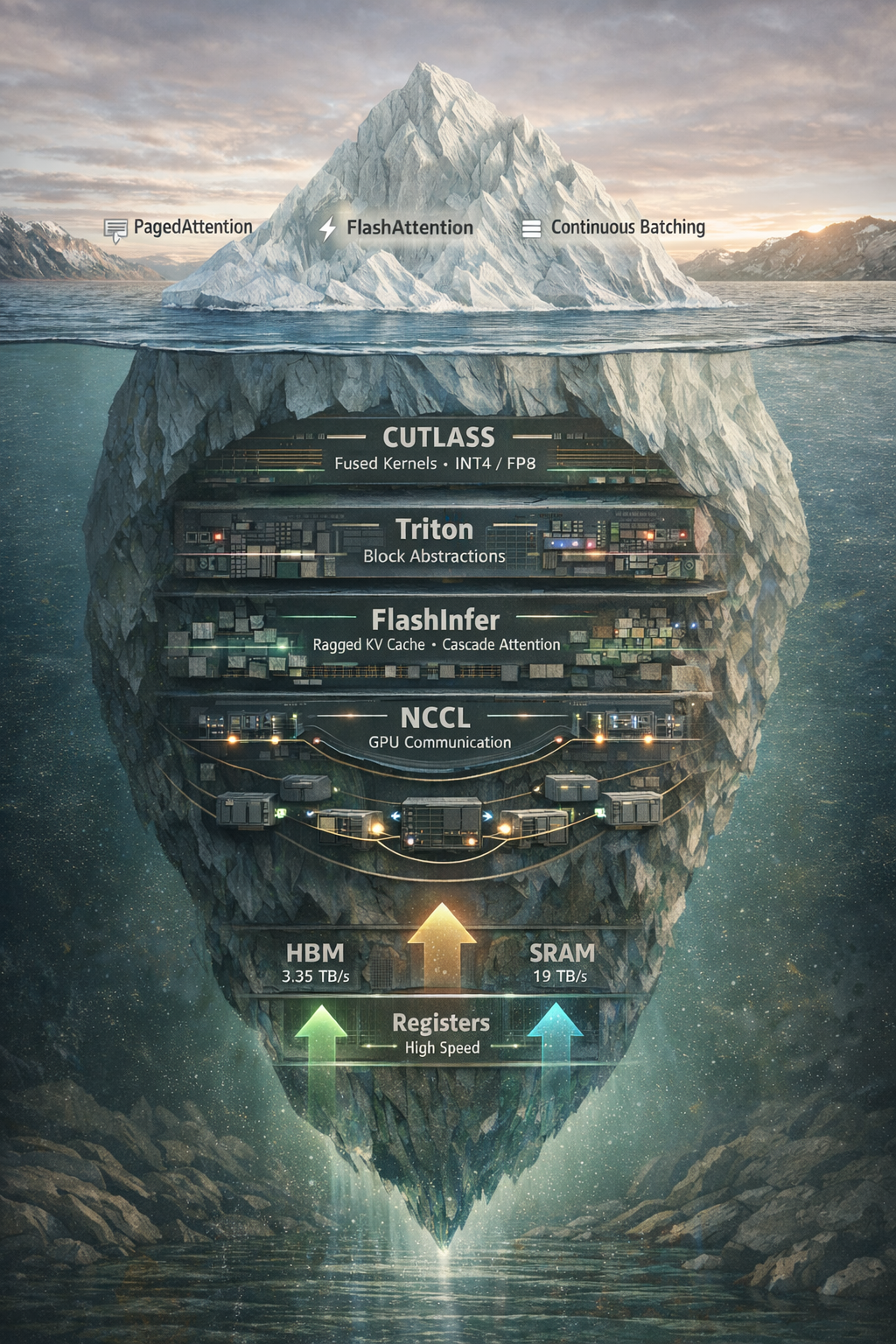

Beyond vLLM and PagedAttention: exploring NCCL, CUTLASS, Triton, and FlashInfer, the libraries that actually make LLM inference fast.