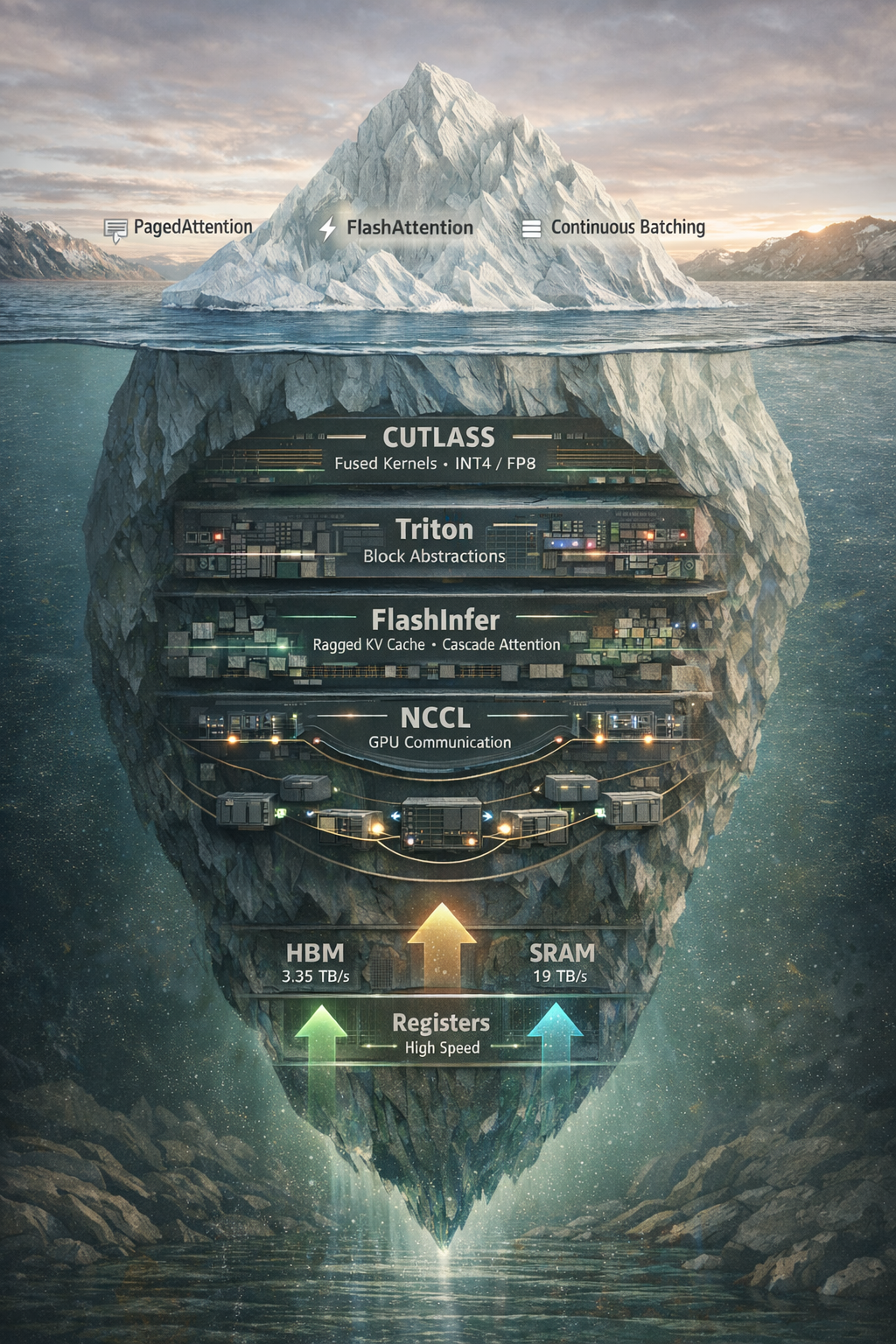

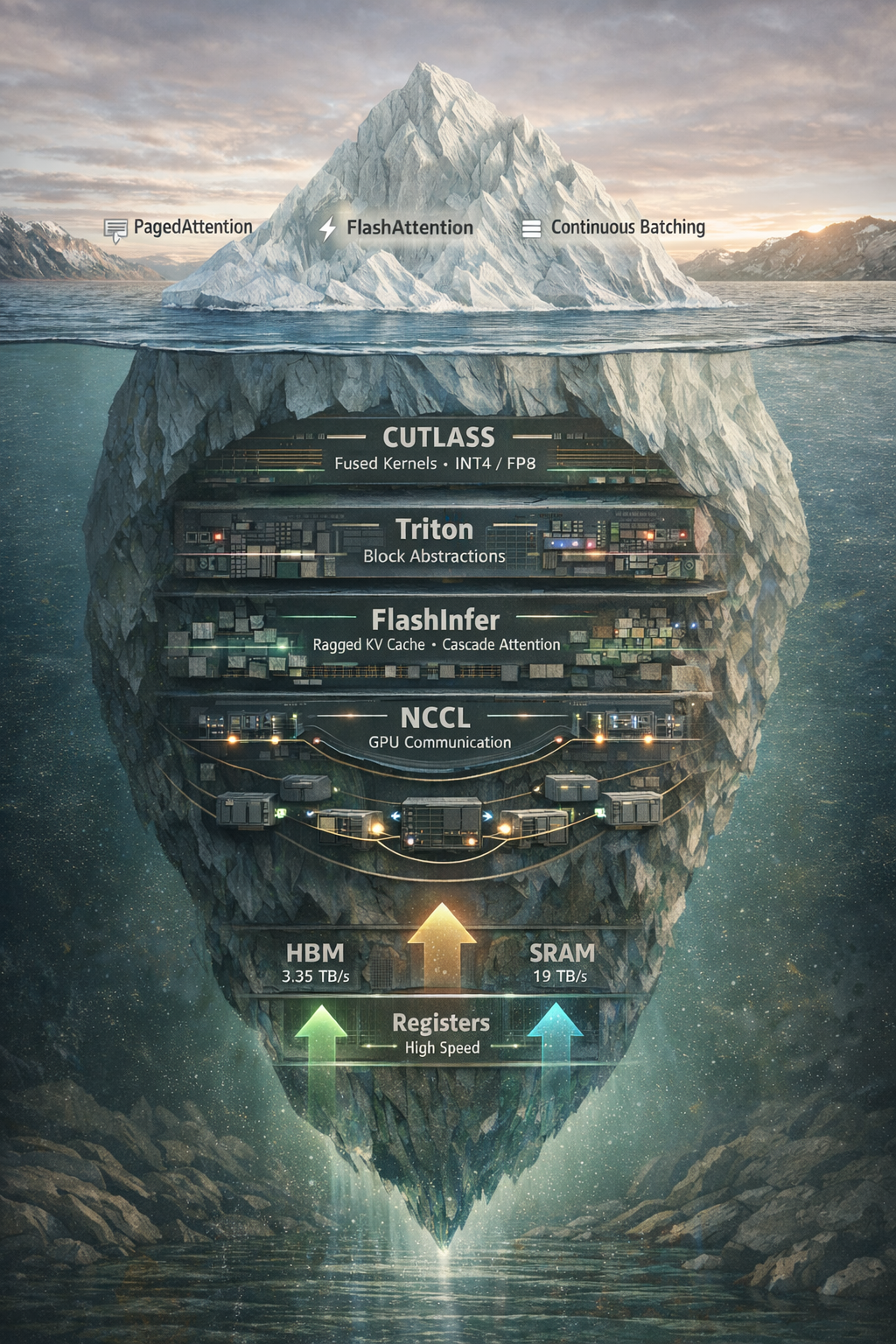

Beyond vLLM and PagedAttention: exploring NCCL, CUTLASS, Triton, and FlashInfer, the libraries that actually make LLM inference fast.

How speculative decoding achieves 2-3× inference speedup without changing model outputs, and why GLM-4.7’s native multi-token prediction marks a paradigm shift.

A deep dive into the architecture, design patterns, and engineering decisions behind production-grade agentic code assist solutions. By dissecting OpenHands, we uncover how to build AI agents that safely execute code, manage complex state, and operate reliably in production.

QuIP#, an algorithm for quantizing LLM weights without gradient information.

How 4–8× compression and Hessian-guided GPTQ make 70B-scale models practical on modest hardware: what INT8/INT4 really cost, and when accuracy holds.

Flash Attention, a memory-efficient attention mechanism for transformers.

A deep dive into NVIDIA’s H100 architecture and the monitoring techniques required for production-grade LLM inference optimization.

How LMCache Turns KV Cache into Composable LEGO Blocks